OpenClaw vs ChatGPT vs Claude API: An Honest Enterprise Comparison

ChatGPT, Claude API, or self-hosted OpenClaw? BOTUM compares all three without bias — so IT directors and CTOs can choose with the right criteria. Comparison table, enterprise scenarios, and concrete recommendations.

The question every CTO eventually asks: "Why not just use ChatGPT?" The short answer is that it depends, and the rest of this post explains on what.

1. The Real Question Behind "Why Not ChatGPT?"

When an IT director asks this question, they're not really asking "Is ChatGPT bad?" They're asking: is the gain in control and flexibility worth the infrastructure investment?

The honest answer: sometimes yes, sometimes no. It depends on the use case, regulatory constraints, and the volume and sensitivity of the data being handled.

This comparison doesn't aim to declare an absolute winner. It maps the contours of three distinct tools — their real strengths and real limitations — so decision-makers can choose with the right criteria.

2. The Three Profiles

ChatGPT / GPT-4 — The SaaS Generalist

ChatGPT is a conversational interface hosted by OpenAI. It's the tool that democratized generative AI for the general public. Its primary strength: accessibility. No infrastructure to manage, no configuration. Open a browser, ask a question, get an answer.

Strengths:

- Near-zero adoption friction: usable within minutes, with nothing to install

- Intuitive interface familiar to non-technical teams

- GPT-4 remains one of the most capable models for writing, summarization, and general-purpose coding

- Broad ecosystem (plugins, API; OpenAI models also power Microsoft 365 Copilot)

- Rapidly evolving plugins and features

Limitations:

- Limited persistent memory (by default, each conversation largely starts from scratch)

- Data sent to OpenAI's infrastructure — problematic for sensitive data

- No autonomous access to your environment (files, APIs, shell commands)

- SaaS cost that accumulates at organizational scale

- Model behavior can change between updates

Ideal use cases: brainstorming, quick writing, non-sensitive internal Q&A, first-level support, ad hoc exploration.

Claude API (Anthropic Direct) — The Raw API

Claude is Anthropic's model, recognized for its ability to process long documents and for its Constitutional AI alignment approach. The direct API targets technical teams who want to integrate an LLM into their own applications; it ships without a user interface.

Strengths:

- Very long context window (up to 200K tokens on Claude 3) — ideal for large documents

- Recognized reasoning quality, particularly on complex analytical tasks

- Per-use pricing (tokens) — predictable for controlled volumes

- Fine-grained parameter control via API (temperature, system prompt, stop sequences)

- Also available through Amazon Bedrock in multiple AWS regions, which can help with data-residency requirements
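To make the parameter control in the list above concrete, here is a minimal sketch of a request to Anthropic's Messages API. It only assembles the headers and body and sends nothing. The model identifier, the system prompt, the `<document>` wrapper, and the `build_request` helper are illustrative assumptions; check the current API reference before relying on any of them.

```python
import json

API_URL = "https://api.anthropic.com/v1/messages"  # Anthropic Messages API endpoint


def build_request(document: str, question: str, api_key: str) -> dict:
    """Assemble headers and body for one Messages API call (nothing is sent)."""
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    body = {
        # Assumption: replace with the model identifier you actually use.
        "model": "claude-3-sonnet-20240229",
        "max_tokens": 1024,
        # Lower temperature gives more deterministic answers for analysis tasks.
        "temperature": 0.2,
        # The system prompt fixes the assistant's role for the whole exchange.
        "system": "You are a contract analyst. Answer only from the document.",
        # Generation stops as soon as the model emits this string.
        "stop_sequences": ["</answer>"],
        "messages": [
            {
                "role": "user",
                "content": f"<document>\n{document}\n</document>\n\n{question}",
            }
        ],
    }
    return {"url": API_URL, "headers": headers, "body": body}


request = build_request(
    "Clause 1: either party may terminate with 30 days notice.",
    "Summarize the termination terms.",
    api_key="YOUR_KEY",
)
print(json.dumps(request["body"], indent=2))
```

Sending the request is then one HTTP POST of `body` as JSON to `url` with those headers, using whichever HTTP client your stack already has.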

Limitations:

- Raw API — requires a development team for integration

- No native agent orchestration — you need to build the logic around it

- Data transmitted to Anthropic (or to AWS when accessed through Bedrock)

- No user interface — UX is entirely your responsibility

- No native persistent memory — must be implemented at the application layer
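As an illustration of what "implemented at the application layer" can mean, the sketch below persists the message history to a JSON file and reloads it in a later session. The `ConversationStore` class and the file layout are hypothetical; a production system would more likely use a database and summarize old turns so the history still fits the context window.

```python
import json
import tempfile
from pathlib import Path


class ConversationStore:
    """Persists message history as JSON so a later session can reload it."""

    def __init__(self, path: Path):
        self.path = path
        # Reload earlier turns if a previous session left a file behind.
        self.messages = json.loads(path.read_text()) if path.exists() else []

    def append(self, role: str, content: str) -> None:
        """Record one turn and write the full history to disk immediately."""
        self.messages.append({"role": role, "content": content})
        self.path.write_text(json.dumps(self.messages, indent=2))

    def as_api_messages(self, last_n: int = 20) -> list:
        """Return the most recent turns, shaped like an API `messages` list."""
        return self.messages[-last_n:]


store_path = Path(tempfile.mkdtemp()) / "conversation.json"

session_1 = ConversationStore(store_path)
session_1.append("user", "Our fiscal year ends in March.")
session_1.append("assistant", "Noted.")

# A second instance simulates a new process or a later session.
session_2 = ConversationStore(store_path)
print(len(session_2.as_api_messages()))
```

The stateless API never sees this store: the application decides which past turns to replay on every call, and that choice is exactly what the integration team has to design.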

Ideal use cases: long document analysis, integration into existing business applications, cases where reasoning quality outweighs speed.

OpenClaw (Self-Hosted + LLM of Choice) — The Enterprise Runtime

OpenClaw is not an LLM. It's a self-hosted AI agent runtime — a platform that orchestrates autonomous agents within your infrastructure. It's LLM-agnostic: you connect your model of choice (Claude, GPT-4, Gemini, or a local model via Ollama).

Strengths:

- Self-hosted — your data stays within your network

- LLM-agnostic — choose and switch models without refactoring

- Native persistent memory — versioned Git workspace between sessions

- Multi-agent — network of specialized agents with communication protocols

- Real system access — files, shell, APIs, browser, Docker

- Modular skills — email, calendar, GitHub, billing...

- Controlled cost: no per-seat license, you pay for the LLM tokens consumed plus your own infrastructure
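The "LLM-agnostic" point in the list above is an architectural pattern more than a feature, and it can be sketched in a few lines. The code below is not OpenClaw's API or configuration format: `ChatModel`, `EchoModel`, `REGISTRY`, and `run_task` are hypothetical names used only to show how calling code can stay unchanged when the backend is swapped.

```python
from typing import Callable, Dict, Protocol


class ChatModel(Protocol):
    """The only contract the rest of the system depends on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real adapter would call a hosted or local model."""

    def __init__(self, name: str):
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# Backends are selected by name, so switching models is a config change.
REGISTRY: Dict[str, Callable[[], ChatModel]] = {
    "hosted": lambda: EchoModel("hosted"),
    "local": lambda: EchoModel("local"),
}


def run_task(prompt: str, backend: str) -> str:
    """Agent logic sees only the ChatModel interface, never a vendor SDK."""
    model = REGISTRY[backend]()
    return model.complete(prompt)


print(run_task("Summarize today's alerts", backend="hosted"))
print(run_task("Summarize today's alerts", backend="local"))
```

The design choice is that vendor-specific code lives in one adapter per model, so replacing a provider touches the registry and one class rather than every agent.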

Limitations:

- Initial learning curve — configuration required

- Requires infrastructure (VM, server) and DevOps competencies

- No out-of-the-box graphical interface — interaction via messaging

- Quality depends on the chosen LLM — OpenClaw doesn't replace a good model

Ideal use cases: sensitive operations automation, multi-domain agent networks, organizations with data sovereignty constraints, environments where vendor lock-in is unacceptable.

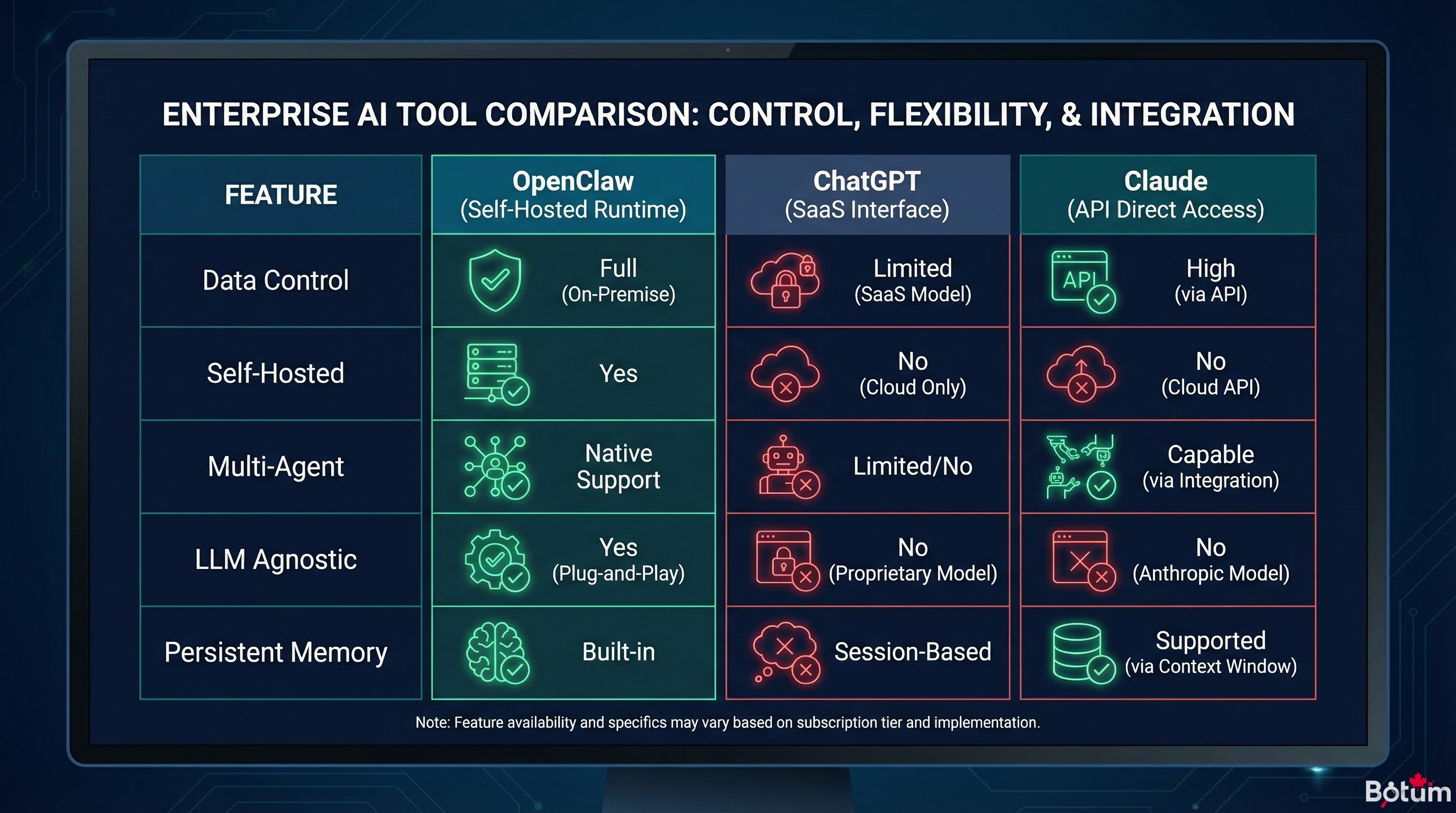

3. Comparison Table

| Criterion | ChatGPT | Claude API | OpenClaw |
|---|---|---|---|
| Data control | ❌ SaaS OpenAI | ⚠️ Anthropic API | ✅ Self-hosted |
| Self-hosted | ❌ | ❌ | ✅ |
| LLM-agnostic | ❌ GPT only | ❌ Claude only | ✅ Your choice |
| Persistent memory | ⚠️ Limited | ❌ Must implement | ✅ Native (Git) |
| Multi-agent | ❌ | ⚠️ Custom dev | ✅ Native |
| System access | ❌ | ❌ | ✅ Shell, files, APIs |
| Ease of adoption | ✅ Immediate | ⚠️ Dev required | ⚠️ Config required |
| Cost at scale | ⚠️ Subscription | ✅ Token usage | ✅ Tokens + infra |
| Native integrations | ✅ Microsoft 365, plugins | ⚠️ Custom API | ✅ Modular skills |

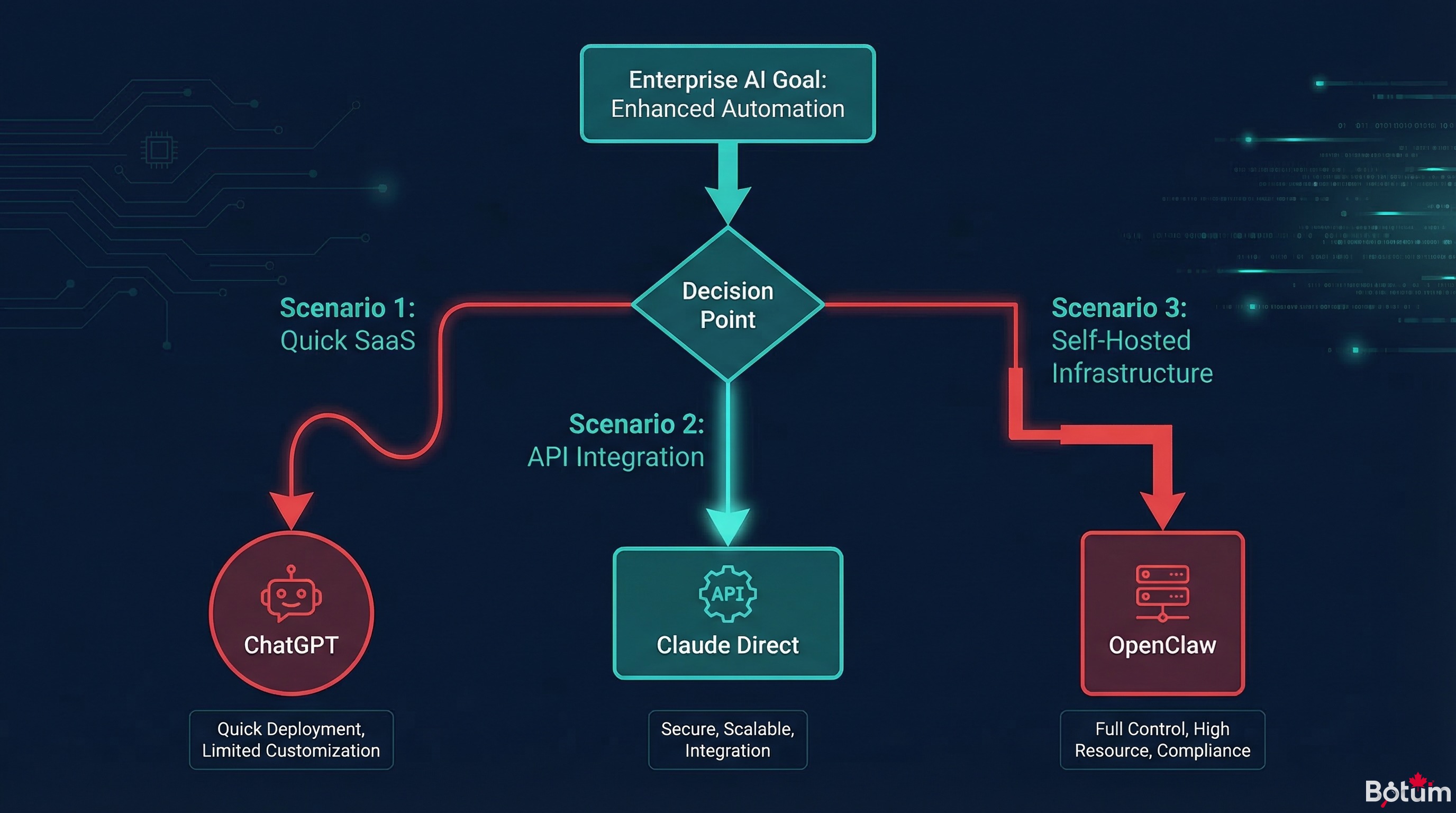
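The "Cost at scale" row compares two different pricing shapes: a subscription scales with headcount, while token pricing scales with usage (plus infrastructure when self-hosting). The sketch below makes that arithmetic explicit. Every number in it is a placeholder chosen for illustration, not a vendor price; substitute your own quotes and traffic estimates.

```python
def subscription_cost(seats: int, price_per_seat: float) -> float:
    """Flat per-seat pricing: cost follows headcount, not usage."""
    return seats * price_per_seat


def token_cost(
    requests: int,
    input_tokens: int,
    output_tokens: int,
    price_in_per_million: float,
    price_out_per_million: float,
    infra_monthly: float = 0.0,
) -> float:
    """Usage pricing: cost follows tokens, plus fixed infrastructure if any."""
    total_in = requests * input_tokens
    total_out = requests * output_tokens
    return (
        total_in / 1_000_000 * price_in_per_million
        + total_out / 1_000_000 * price_out_per_million
        + infra_monthly
    )


# Placeholder monthly figures (assumptions, not real prices).
saas = subscription_cost(seats=50, price_per_seat=30.0)
api_only = token_cost(20_000, 2_000, 500, 3.0, 15.0)
self_hosted = token_cost(20_000, 2_000, 500, 3.0, 15.0, infra_monthly=200.0)

print(f"subscription: {saas:.2f}")
print(f"direct API:   {api_only:.2f}")
print(f"self-hosted:  {self_hosted:.2f}")
```

Which option comes out cheaper depends entirely on the ratio of seats to actual usage, which is why the table marks the subscription as a caution rather than a failure.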

4. When to Choose What — 3 Concrete Scenarios

Scenario 1: 50-person SMB, non-technical team, needs writing assistance and summarization

Recommendation: ChatGPT Enterprise

Adoption is immediate, the interface is familiar, and the needs don't justify the overhead of dedicated infrastructure. Data being processed is low-sensitivity (writing, internal summaries). Per-user cost is predictable. This is the right tool for this profile.

Scenario 2: Consulting firm, analysis of large contracts, integration into existing workflow

Recommendation: Claude API

Claude's long context window is a concrete advantage for large legal or financial documents. The technical team can integrate the API into their document processing workflow. Data isn't ultra-sensitive but requires a level of control that consumer SaaS doesn't guarantee.

Scenario 3: Tech scale-up, internal operations automation (email, billing, monitoring), sensitive client data

Recommendation: OpenClaw self-hosted

Real system access, persistent memory, and multi-agent capability are required. Client data cannot leave the infrastructure. LLM-agnostic architecture allows cost/performance optimization per task. The initial configuration investment is offset by durable automation.

5. The Enterprise Case — Why Self-Hosted Wins for Sensitive Use Cases

For organizations with strong regulatory constraints (strict GDPR, financial sector, healthcare, legal), the choice isn't always about preference — it's about compliance.

When an AI agent accesses client data, contractual documents, production logs, or system credentials, the question "where does this data go?" is not optional. The answer "to a US provider's servers" can be a regulatory blocker or simply a risk the CISO cannot accept.

Self-hosted OpenClaw addresses this structurally: only the prompt (the question plus whatever context the agent attaches) leaves your network for the LLM provider, while the source data stays on your infrastructure. Keeping that attached context minimal is therefore what limits exposure. And if you use a local model via Ollama, nothing leaves at all.
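One way to enforce "minimal context" in practice is to redact identifiers before a prompt is sent to an external provider, and skip redaction when the model runs locally. The sketch below is an assumption about how such a guard could look, not a description of an OpenClaw feature; the two regular expressions are deliberately simple and would need hardening for real data.

```python
import re

# Simplified patterns for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
IBAN = re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b")


def redact(text: str) -> str:
    """Replace e-mail addresses and IBAN-like strings with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return IBAN.sub("[IBAN]", text)


def build_prompt(question: str, context: str, provider_is_local: bool) -> str:
    """Redact context only when the prompt will leave the network."""
    safe_context = context if provider_is_local else redact(context)
    return f"Context:\n{safe_context}\n\nQuestion: {question}"


context = "Contact jane.doe@example.com, IBAN FR7630006000011234567890189."

print(build_prompt("Who is the billing contact?", context, provider_is_local=False))
print(build_prompt("Who is the billing contact?", context, provider_is_local=True))
```

Because the guard sits in the runtime rather than in each agent, the same rule applies regardless of which model is connected.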

This is a fundamental architectural difference, not a matter of trust in SaaS vendors.

6. The Real Question — It's Not "Which Is Better"

The "ChatGPT vs Claude vs OpenClaw" debate is often poorly framed. These aren't three competitors fighting for the same market — they're three types of tools addressing different needs.

ChatGPT is an interface tool. Claude API is an integration component. OpenClaw is an automation platform.

In practice, these three tools can coexist in the same organization:

- ChatGPT for teams' day-to-day ad hoc needs

- Claude API integrated into business applications that process documents

- OpenClaw orchestrating automated operations with Claude as the primary model

The question isn't "which to choose" — it's "for which use case, with what constraints."

🚀 Go Further with BOTUM

These comparisons intentionally simplify reality. In production, AI tooling choices depend on your stack, regulatory constraints, and data flows. BOTUM teams have deployed these architectures in real enterprise environments — and know the pitfalls that benchmarks don't show.

Conclusion

Choosing between ChatGPT, Claude API, and OpenClaw starts with answering three questions: Where is my data? How much autonomy do I need? Who maintains the infrastructure?

If the answers are "with a third party, I don't mind," "just a Q&A interface," and "nobody, I want zero ops" — ChatGPT. If it's "I'm building an application" and "Claude works for me" — the direct API. If it's "on my premises," "full system access," and "I have a technical team" — OpenClaw.
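The three questions above amount to a small decision rule, sketched here as a function. The parameter names and the fallback message are assumptions added for illustration; real decisions will involve more inputs than four booleans.

```python
def recommend(
    data_on_premises: bool,
    needs_system_access: bool,
    has_technical_team: bool,
    building_application: bool,
) -> str:
    """Map the answers to the three questions onto one of the three tools."""
    # Data must stay in-house, agents need system access, and someone can run it.
    if data_on_premises and needs_system_access and has_technical_team:
        return "OpenClaw"
    # An LLM is being embedded in an application and third-party hosting is fine.
    if building_application and not data_on_premises:
        return "Claude API"
    # No sovereignty constraint, no system access, no app to build: zero ops.
    if not (data_on_premises or needs_system_access or building_application):
        return "ChatGPT"
    # Mixed answers: the constraints conflict and need a closer look.
    return "No single match: revisit the constraints"


print(recommend(False, False, False, False))
print(recommend(False, False, True, True))
print(recommend(True, True, True, False))
print(recommend(True, True, False, False))
```

The last call shows the case the summary does not cover: on-premises requirements without a technical team, where no option fits as-is.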

→ Post 7 coming soon: OpenClaw + DeepSeek — deploying a high-performance local model in an enterprise agent network.
