OpenClaw Hub: 10 Guides to Deploy Your AI Agent Network

Central hub of the OpenClaw series: 10 guides to understand, install, secure, and operate a self-hosted AI agent network in production. From installation to RAG — complete journey documented by BOTUM.

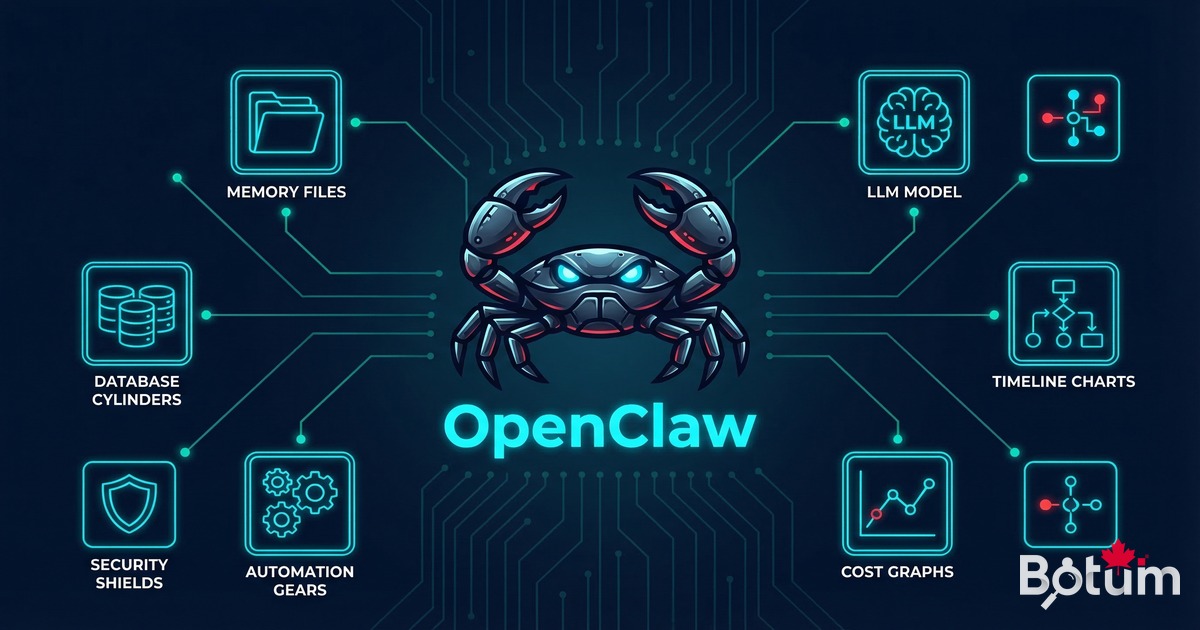

OpenClaw is not another conversational assistant. It's a self-hosted AI agent runtime — a platform that transforms an isolated assistant into a team of autonomous agents operating on your infrastructure. This series of 10 guides documents how to deploy, secure, and operate this type of architecture in production.

What OpenClaw Is — And Why It Changes Everything

Most organizations begin their AI journey with conversational interfaces: ChatGPT, Claude, Gemini. These tools excel at on-demand assistance: drafting an email, explaining a concept, debugging code. But they operate outside the organization's environment. Whatever memory they keep is limited and vendor-managed, they have no access to internal infrastructure, and they cannot act autonomously within it.

OpenClaw operates on a radically different model. The agent has a persistent local workspace: a versioned directory that holds its working files, its memory, and a record of all its actions. It can read and write files, execute system commands, call APIs, control a browser, and manage calendars and email. It receives instructions through the team's messaging app and acts directly on the real infrastructure.

The conceptual distinction is fundamental: you don't consult OpenClaw, you delegate responsibilities to it.

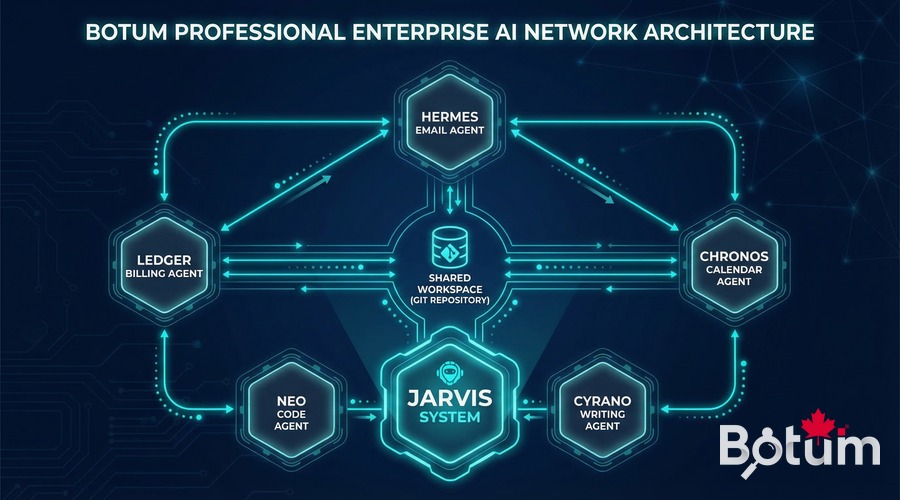

When you move from a single agent to a network of specialized agents, each with a defined scope, its own identity, and its own rules, you get something closer to an organization than a tool: 24/7 operational continuity, native Git traceability, and composable automation.

The 10-Step Progression

This series was designed as a structured learning path: each guide builds on the previous ones, from the most conceptual to the most technical. An IT professional can go through the entire series in a few hours and come out with a complete operational understanding — or select the modules relevant to their situation.

The path follows a construction logic:

- Steps 1-2: Understand the ecosystem and lay the foundations (installation, workspace, first skills)

- Steps 3-4: Secure the architecture before going further (authentication, SSL, secrets vault)

- Steps 5-6: Configure agents and evaluate available LLM models

- Steps 7-8: Extend with local LLMs and automate operations

- Steps 9-10: Advanced architectures — long-term memory and vector databases (RAG)

What the Series Covers

Here is the detailed content of each of the 10 guides:

Guide 1 — Agent Network: Field Report

The OpenClaw concept from scratch: why self-hosted, how the persistent workspace works, and why moving from a single agent to a network fundamentally changes the relationship to automation. Field report from the BOTUM deployment.

Guide 2 — Installation & Workspace

Complete installation of OpenClaw on a Linux VM, workspace configuration, activation of the first skills, and deployment of a first operational agent in under 30 minutes. Every step is documented.

Guide 3 — Securing in Production

An AI agent's attack surface is real. This guide covers authentication (authorized senders), HTTPS reverse proxy with Nginx and Let's Encrypt, network isolation, sandboxing, and the complete production security checklist.
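The "authorized senders" idea boils down to a deny-by-default check that runs before a message ever reaches the model. A minimal sketch, assuming each inbound message carries a channel-qualified sender ID; the `InboundMessage` type, the `AUTHORIZED_SENDERS` set, and the ID format are hypothetical illustrations, not OpenClaw's actual configuration.

```python
from dataclasses import dataclass
from typing import FrozenSet

# Hypothetical allowlist of channel-qualified sender IDs allowed to give instructions.
# In a real deployment this would be loaded from the agent's configuration.
AUTHORIZED_SENDERS: FrozenSet[str] = frozenset({"telegram:123456789", "slack:U024BE7LH"})


@dataclass(frozen=True)
class InboundMessage:
    sender_id: str  # e.g. "telegram:123456789" (hypothetical format)
    text: str


def is_authorized(msg: InboundMessage, allowlist: FrozenSet[str] = AUTHORIZED_SENDERS) -> bool:
    """Deny by default: only an exact match on the allowlist is accepted."""
    return msg.sender_id in allowlist


def handle(msg: InboundMessage) -> str:
    # The check runs before any text reaches the model, so a message from an
    # unknown sender never becomes part of the agent's context.
    if not is_authorized(msg):
        return "rejected"
    return "accepted"  # a real handler would forward msg.text to the agent here
```

Matching on a stable, full sender ID rather than a display name matters, since display names are usually editable by the user.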

Guide 4 — Secrets & Vault

An AI agent with access to your credentials represents a non-trivial risk. This guide documents how to structure SOUL.md and the other agent files so that secrets never enter the model's context, and how to integrate a secrets vault.
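One common way to keep credentials out of the model's context is indirection: the agent only ever handles a placeholder, the tool executor substitutes the real value at run time, and any copy of it that leaks into tool output is masked before flowing back. A minimal sketch, assuming environment variables (or any key/value mapping standing in for a vault client) as the secret store; the `${secret:NAME}` syntax and the function names are hypothetical, not OpenClaw's own mechanism.

```python
import os
import re
from typing import List, Mapping, Optional

# Hypothetical placeholder syntax: the model only ever writes ${secret:NAME}.
PLACEHOLDER = re.compile(r"\$\{secret:([A-Z0-9_]+)\}")


def resolve_secrets(command: str, store: Optional[Mapping[str, str]] = None) -> str:
    """Replace placeholders with real values just before execution, outside the model context."""
    source = os.environ if store is None else store

    def lookup(match: re.Match) -> str:
        name = match.group(1)
        if name not in source:
            raise KeyError(f"secret {name!r} is not defined")
        return source[name]

    return PLACEHOLDER.sub(lookup, command)


def redact(output: str, secret_values: List[str]) -> str:
    """Mask any secret value that leaks into tool output before it goes back to the model."""
    for value in secret_values:
        if value:  # skip empty strings, which would match everywhere
            output = output.replace(value, "[REDACTED]")
    return output
```

With a vault, `lookup` would call the vault client instead of reading a mapping; the rest of the flow stays the same.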

Guide 5 — Configuring First Agents

How to create and configure specialized agents with distinct identities: JARVIS (system), HERMÈS (email), CHRONOS (calendar). Shared workspace architecture, inter-agent communication protocols, task queues.

Guide 6 — OpenClaw vs ChatGPT vs Claude API

Structured comparison of approaches: direct LLM API, SaaS interfaces, and self-hosted OpenClaw. Decision criteria based on constraints (privacy, cost, control, scalability). When each approach makes sense.

Guide 7 — DeepSeek & Local LLM

Deploying a local LLM with Ollama and DeepSeek. Advantages (no per-token inference cost, data never leaves your infrastructure), constraints (RAM, GPU), and optimal use cases. Smart routing between LLMs based on task complexity.
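Routing by task complexity can start as a simple heuristic placed in front of two model endpoints. A minimal sketch, assuming one local model (for example DeepSeek served by Ollama) and one hosted API model; the model labels, keyword list, and threshold are hypothetical values to tune, and a real router might instead ask a small model to classify the task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str   # hypothetical model label
    reason: str


# Hypothetical labels for a local model served by Ollama and a hosted API model.
LOCAL_MODEL = "local-deepseek"
REMOTE_MODEL = "remote-frontier"

# Illustrative keywords that suggest multi-step reasoning; tune for your workload.
COMPLEX_HINTS = ("architecture", "refactor", "analyze", "plan", "compare")


def estimate_complexity(task: str) -> int:
    """Crude score: long prompts and reasoning keywords (substring match) raise it."""
    score = len(task.split()) // 50
    lowered = task.lower()
    score += sum(2 for hint in COMPLEX_HINTS if hint in lowered)
    return score


def route(task: str, sensitive: bool = False, threshold: int = 3) -> Route:
    # Sensitive data stays local regardless of complexity.
    if sensitive:
        return Route(LOCAL_MODEL, "sensitive data")
    if estimate_complexity(task) >= threshold:
        return Route(REMOTE_MODEL, "complex task")
    return Route(LOCAL_MODEL, "simple task")
```

The `sensitive` flag is checked first so that a privacy constraint is never overridden by the complexity score.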

Guide 8 — Automating Operations

Crons, triggers, task queues, escalations. How to orchestrate an agent network on complex workflows: daily reports, monitoring, alerts, multi-step pipelines. Proven automation patterns from production.
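The retry-then-escalate pattern can be sketched as a small in-process queue. This is a minimal illustration, assuming tasks are plain callables that return success or failure; `Task` and `run_queue` are hypothetical names, and a production setup would persist the queue and start it from cron or an event trigger.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, List


@dataclass
class Task:
    name: str
    action: Callable[[], bool]  # returns True on success
    attempts: int = 0


def run_queue(tasks: List[Task], max_attempts: int = 3) -> List[str]:
    """Process tasks FIFO, requeue failures, escalate after max_attempts. Returns escalated names."""
    queue: Deque[Task] = deque(tasks)
    escalated: List[str] = []
    while queue:
        task = queue.popleft()
        task.attempts += 1
        try:
            ok = task.action()
        except Exception:
            ok = False  # treat a crash like a failure
        if ok:
            continue
        if task.attempts >= max_attempts:
            escalated.append(task.name)  # hand off to a human or a supervisor agent
        else:
            queue.append(task)  # retry after the other queued tasks
    return escalated
```

Requeuing at the back of the queue, rather than retrying immediately, keeps one failing task from blocking the others.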

Guide 9 — Memory & Context

Memory management in a long-running agent context: memory.md, context compaction, hierarchical memory (HOT/WARM/COLD), shared sessions between agents. How to preserve continuity over weeks of operation.
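One way to read a HOT/WARM/COLD hierarchy is as tiers assigned by how recently an entry was used. A minimal sketch, assuming recency-based tiering; the thresholds and the per-tier loading policy in the comments are hypothetical, not the scheme documented in the guide.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List

# Hypothetical thresholds: entries untouched for longer are demoted.
HOT_MAX_AGE = timedelta(days=2)
WARM_MAX_AGE = timedelta(days=30)


@dataclass
class MemoryEntry:
    text: str
    last_used: datetime


def tier_of(entry: MemoryEntry, now: datetime) -> str:
    age = now - entry.last_used
    if age <= HOT_MAX_AGE:
        return "HOT"   # assumed policy: loaded into every context
    if age <= WARM_MAX_AGE:
        return "WARM"  # assumed policy: summarized or loaded on demand
    return "COLD"      # assumed policy: archived, retrieved only by explicit search


def partition(entries: List[MemoryEntry], now: datetime) -> Dict[str, List[str]]:
    tiers: Dict[str, List[str]] = {"HOT": [], "WARM": [], "COLD": []}
    for entry in entries:
        tiers[tier_of(entry, now)].append(entry.text)
    return tiers
```

Passing `now` explicitly keeps the tiering deterministic and easy to test.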

Guide 10 — Databases & RAG

Connecting OpenClaw to PostgreSQL with pgvector and building a RAG (Retrieval-Augmented Generation) pipeline. Semantic search over internal documentation, emails, and contracts: the agent that "knows" what's in your data.
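The retrieval step at the heart of RAG ranks stored passages by embedding similarity to the question, then passes the best matches to the model as context. A minimal in-memory sketch, assuming the embeddings have already been computed by some embedding model; the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: Sequence[float], docs: List[Tuple[str, Sequence[float]]], k: int = 2) -> List[str]:
    """Return the IDs of the k documents whose embeddings are most similar to the query."""
    ranked = sorted(docs, key=lambda d: cosine_similarity(query, d[1]), reverse=True)
    return [doc_id for doc_id, _ in ranked[:k]]


def build_prompt(question: str, passages: List[str]) -> str:
    # Retrieved passages are injected as grounding context for the model.
    context = "\n\n".join(passages)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"
```

With pgvector, the ranking moves into PostgreSQL: its `<=>` operator returns cosine distance, so a query such as `SELECT id FROM docs ORDER BY embedding <=> $1 LIMIT 5` (table and column names hypothetical) does the equivalent of `top_k`, with ascending distance matching descending similarity.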


Upcoming Series Announced

The OpenClaw series is the first module of a broader program that BOTUM is publishing in 2026 on self-hosted AI architectures and enterprise infrastructure. The following series are in development:

- AI Insights Series — Technology monitoring, model analysis, comparative benchmarks. For teams that need to choose their AI tools with objective criteria rather than marketing.

- VMware → Alternatives Series — Post-Broadcom migration: Proxmox, OpenStack, Harvester. Field reports from real migrations, pitfalls to avoid, decision criteria for CIOs.

- Infrastructure Security Series — Zero trust, hardening, open-source SIEM, advanced OPNsense. Secure infrastructure as a prerequisite for any serious AI deployment.

- Enterprise Kubernetes Series — Container orchestration in production, GitOps, observability, cloud cost management.

These series will be available from the main BOTUM blog page. To be notified of new publications, subscribe to the newsletter.

🚀 Deploy OpenClaw in Production with BOTUM

These 10 guides cover the essentials. In production, every AI agent deployment has its own constraints: sizing, security, memory management, business integrations. BOTUM teams guide organizations from audit to production.

Talk to a BOTUM Expert →

📄 Download the PDF Guide: OpenClaw Series (EN)

Complete series summary: architecture, progression, key points from each guide.

Download PDF →