Business Continuity and Kubernetes: Cloud Resilience for Canadian SMEs

Final post of the Cloud Journey series. Cloud BCP, RTO/RPO, Kubernetes auto-healing, Velero, ArgoCD, and Cloudflare failover — the complete resilience stack for Canadian SMEs.

Here's the question nobody asks before migrating to the cloud. Not the CTO, not the DevOps lead, and certainly not the consultant selling you digital transformation. Here it is: "If our cloud infrastructure goes completely down tonight at 11 PM, how many hours before our customers can get back to work?"

Most Canadian SMEs that have moved to the cloud can't answer this clearly. They know their data is "somewhere on AWS" or "in Azure." They might have automatic snapshots. But a tested, documented Business Continuity Plan (BCP) with quantified recovery objectives? Rarely.

This post — the final entry in our Cloud Journey series — is the most critical of all. Because everything we've built together since B01 can collapse if you don't have a solid BCP. Infrastructure as code, Zero Trust security, multi-cloud, FinOps — all of it becomes moot if a major outage takes you offline for 48 hours.

Cloud BCP: RTO and RPO — defining your thresholds by business impact

Before talking technology, two fundamental metrics every technical leader must know by heart:

RTO — Recovery Time Objective: The maximum acceptable time between the start of an outage and full service restoration. If your RTO is 4 hours, your infrastructure must be able to recover in under 4 hours, no matter what. Exceeding this threshold has measurable consequences: lost revenue, contractual penalties, reputational damage.

RPO — Recovery Point Objective: The maximum amount of data you can afford to lose, expressed as time. An RPO of 15 minutes means your backups must be frequent enough that you never lose more than 15 minutes of data. An RPO of 24 hours means an entire day of transactions could disappear.

How do you define these thresholds for your SME? By business impact:

- E-commerce / B2C SaaS: Every minute of interruption = lost revenue. Target RTO: 15-30 min. RPO: 5 min.

- B2B SaaS / Business applications: Customers tolerate short interruptions. Target RTO: 1-4 hours. RPO: 15-30 min.

- Internal tools / Back-office: Interruption is inconvenient but not catastrophic. Target RTO: 4-24 hours. RPO: 1-4 hours.

- Financial services / Healthcare: Regulated. RTO and RPO mandated by sector standards (OSFI, PHIPA). Often < 4h and < 1h respectively.

A classic mistake: setting ambitious RTO/RPO targets without evaluating the infrastructure cost to achieve them. Each 10x reduction in RTO costs approximately 3x more in infrastructure. Choose wisely.

Cloud backup strategies: the cost vs. RTO matrix

There's no universal backup strategy. Here are the main options, from most economical to most robust:

Snapshots + cold backup: Minimal cost. You create regular snapshots of your volumes and databases, stored in S3/Azure Blob. RTO: 4-24 hours (restoration time). RPO: depends on snapshot frequency. Suitable for non-critical environments. Recommended tools: AWS Data Lifecycle Manager, Azure Backup, or Velero for Kubernetes.

Cross-region replication: Your data is replicated in near-real-time to a different geographic region. If ca-central-1 (Canada) goes down, you failover to us-east-1. RTO: 30-120 min. RPO: 1-5 min. Additional cost: cross-region replication fees (typically $0.02/GB). For databases, use RDS Multi-AZ or Azure Database geo-replication.

Warm standby: A reduced but functional infrastructure runs permanently in a secondary region. It receives data continuously but handles little or no traffic. When an outage hits, you scale out this infrastructure and switch DNS. RTO: 15-60 min. RPO: 5-15 min. Cost: 20-40% of your primary infrastructure.

Active-Active multi-region: Two or more regions process traffic simultaneously via a global load balancer. When one region fails, others automatically absorb the traffic. RTO: < 5 min. RPO: < 1 min. Cost: double or triple your infrastructure. Reserved for mission-critical applications.

Kubernetes for resilience: what orchestration actually changes

Kubernetes isn't just a container orchestrator. Properly configured, it's an autonomous resilience system that handles problems you previously spent hours managing manually.

Auto-healing: If a Pod crashes, Kubernetes restarts it automatically. If a Node (VM) goes down, Kubernetes reschedules all its Pods to available Nodes. This behavior is built-in, not a paid feature. For an SME, this means "server crashes" are handled without human intervention, typically in under 2 minutes.

Rolling deployments: Updates deploy progressively, with zero downtime. Kubernetes replaces old Pods one by one, waiting for the new one to be healthy before continuing. If a new Pod fails its health check, the rollout pauses automatically. Result: zero-downtime deployments for your application updates.

Proactive health checks: Define livenessProbe and readinessProbe for each application. The livenessProbe detects if the application is alive (otherwise → automatic restart). The readinessProbe determines if the Pod is ready to receive traffic (otherwise → removed from load balancer). These two mechanisms eliminate the majority of production incidents related to intermediate application states.

Resource requests and limits: Kubernetes guarantees each Pod a minimum of CPU/RAM (requests) and prevents it from consuming beyond a maximum (limits). No more situations where a "runaway" application eats all server resources and crashes everything else. Isolation is at the Linux kernel level via cgroups.

High availability architecture: multi-AZ, load balancing, circuit breaker

High availability isn't a state — it's an architecture. Here are the pillars:

Multi-AZ (Availability Zones): Deploy your Kubernetes workers across at least 2 availability zones in your cloud region. AZs are physically separate datacenters with independent power and networking. An AZ failure (rare but real) doesn't impact your other AZs. Additional cost: near zero, just resource duplication. Configuration: NodeAffinity + PodAntiAffinity in your Kubernetes manifests.

Application load balancing: In front of your K8s cluster, a load balancer distributes incoming traffic. On AWS: ALB (Application Load Balancer) with AWS Load Balancer Controller integration for K8s. On Azure: Azure Application Gateway. On GCP: Cloud Load Balancing. The Ingress Controller (nginx-ingress or Traefik) handles routing within the cluster.

Circuit breaker pattern: Inspired by electronics, this pattern protects your system against cascading failures. If a dependent service (e.g., an external API) starts responding slowly, the circuit breaker "opens" the connection and returns a degraded response instead of accumulating timeouts. Kubernetes implementations: Istio (full service mesh) or Envoy (lighter). For SMEs, the Resilience4j library (Java) or Go's Circuit Breaker offer application-level implementation without additional infrastructure.

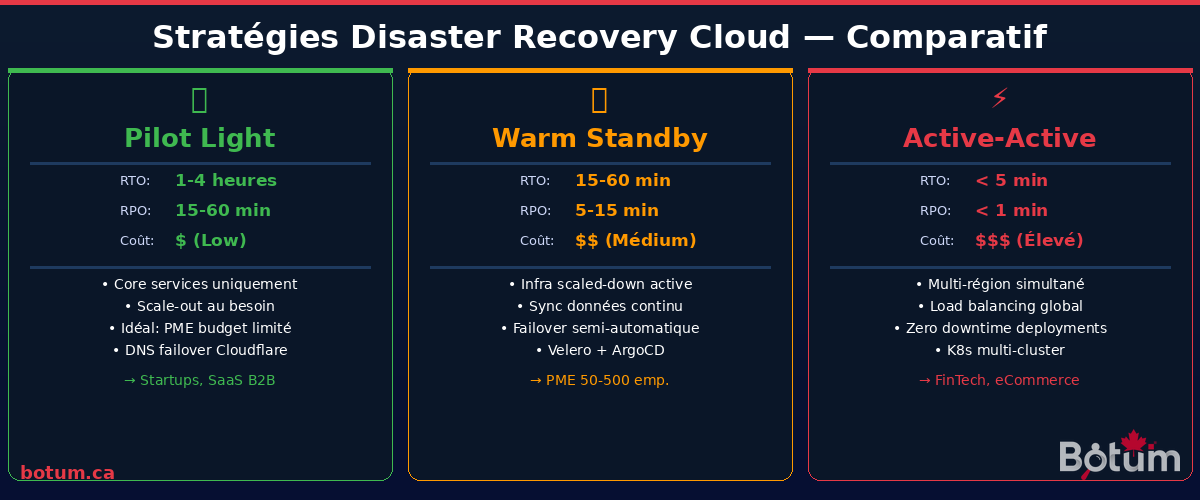

Cloud Disaster Recovery: Pilot Light, Warm Standby, Active-Active

Choosing the right DR strategy means finding the balance between target RTO and available budget:

Pilot Light: Only critical components (replicated database, up-to-date AMI/container images) are maintained in the DR region. In case of disaster, you spin up the rest of the infrastructure via Terraform and redirect DNS. RTO: 1-4 hours. This is the recommended strategy for SMEs with limited DR budgets but who need a real plan. Typical monthly cost: 5-15% of primary infrastructure.

Warm Standby: A reduced but operational version of your infrastructure runs permanently in the DR region. When disaster strikes, you scale this infrastructure to production level and switch traffic. RTO: 15-60 min. Recommended for SMEs with contractual SLAs. Monthly cost: 20-40% of primary infrastructure.

Active-Active: Two or more regions handle production traffic permanently. Failover is automatic and transparent to users. RTO: < 5 min, often < 1 min. Requires compatible application architecture (stateless, distributed data synchronization). Reserved for applications where one minute of interruption costs more than the complete DR infrastructure. Cost: 80-100% additional.

Resilience testing: chaos engineering and fire drills

An untested BCP is fiction. The real question isn't "do we have a plan?" but "have we proven this plan works?"

Chaos engineering: Introduce controlled failures in production (or staging) to validate your system's resilience. Netflix popularized this approach with its Simian Army (Chaos Monkey, Latency Monkey, etc.). For SMEs, LitmusChaos is the most accessible open-source tool: it integrates directly with Kubernetes and can simulate Pod failures, Node failures, network latency, disk errors. Start with simple experiments: kill a random Pod, simulate 200ms latency on a critical service. Observe how your system reacts before a real outage does it for you.

Fire drills: At least once per quarter, simulate a complete disaster scenario. Cut the primary region in your lab (or staging). Time the actual recovery. Document the blockers (the DBA who can no longer access the vault, the failover process requiring undocumented manual intervention). Every fire drill reveals gaps your theoretical documentation didn't see.

Runbooks: Document every recovery procedure as if you had to execute it at 3 AM, exhausted, under pressure. Runbooks should be copyable command lists, not conceptual descriptions. Store them in your git repo (accessible even if your Notion or Confluence is down), and test them during every fire drill.

Compliance and BCP: Canadian regulatory requirements

In Canada, business continuity requirements are not optional in several sectors:

OSFI E-21 (Financial sector): The Office of the Superintendent of Financial Institutions' E-21 guideline imposes explicit operational resilience requirements, including RTO/RPO definition, regular recovery testing, and critical dependency documentation. Federally regulated financial institutions (banks, insurers, credit unions) must demonstrate compliance. Fintechs working with these institutions indirectly inherit these requirements through their contracts.

PHIPA (Ontario healthcare): The Personal Health Information Protection Act requires health information custodians to maintain administrative, technical, and physical security measures to protect data, including continuity plans to ensure health data availability. A health data loss following an outage not covered by a BCP may constitute a breach.

PIPEDA / Law 25 (Federal + Quebec): The Personal Information Protection and Electronic Documents Act, and its Quebec equivalent, impose notification obligations for "security breaches" posing a real risk of significant harm. A cloud incident resulting in data loss could trigger these obligations. Having a documented and tested BCP demonstrates the due diligence required.

Recommended SME stack for Kubernetes BCP

Here's the stack we recommend and use at BOTUM for SMEs running Kubernetes clusters:

Velero (Kubernetes Backup): The reference tool for K8s cluster backups. Velero backs up both Kubernetes resources (Deployments, Services, ConfigMaps, Secrets) AND persistent volumes (PVCs). Supports S3, Azure Blob, GCS as destinations. Typical configuration: hourly backups, 7-day retention, daily snapshots with 30-day retention. Restoring a complete namespace typically takes < 10 minutes.

ArgoCD or Flux (GitOps for DR): Your DR cluster should not be manually configured — it should be synchronized from your git repo via GitOps. If your primary cluster goes down, your DR cluster is already up-to-date with your latest configuration. ArgoCD offers an intuitive UI; Flux is more lightweight. Both integrate with Velero for a complete DR strategy. In case of disaster: 1) Switch DNS to DR cluster. 2) ArgoCD/Flux has already deployed all your applications. 3) Velero restores the data.

Cloudflare (DNS Failover): Cloudflare Load Balancing with health checks enables automatic DNS failover from your primary region to your DR region as soon as a health check fails. TTL configurable to 30 seconds for rapid propagation. The Cloudflare Business plan ($200/month) is sufficient for most SMEs. Cheaper alternative: Route 53 Health Checks on AWS.

Monitoring and alerting: Prometheus + Alertmanager for K8s metrics, with PagerDuty or OpsGenie alerts for on-call. Define SLOs (Service Level Objectives) in Prometheus and alert when approaching the threshold (e.g., alert at 99.9% availability rather than waiting for the outage at 99.5%).

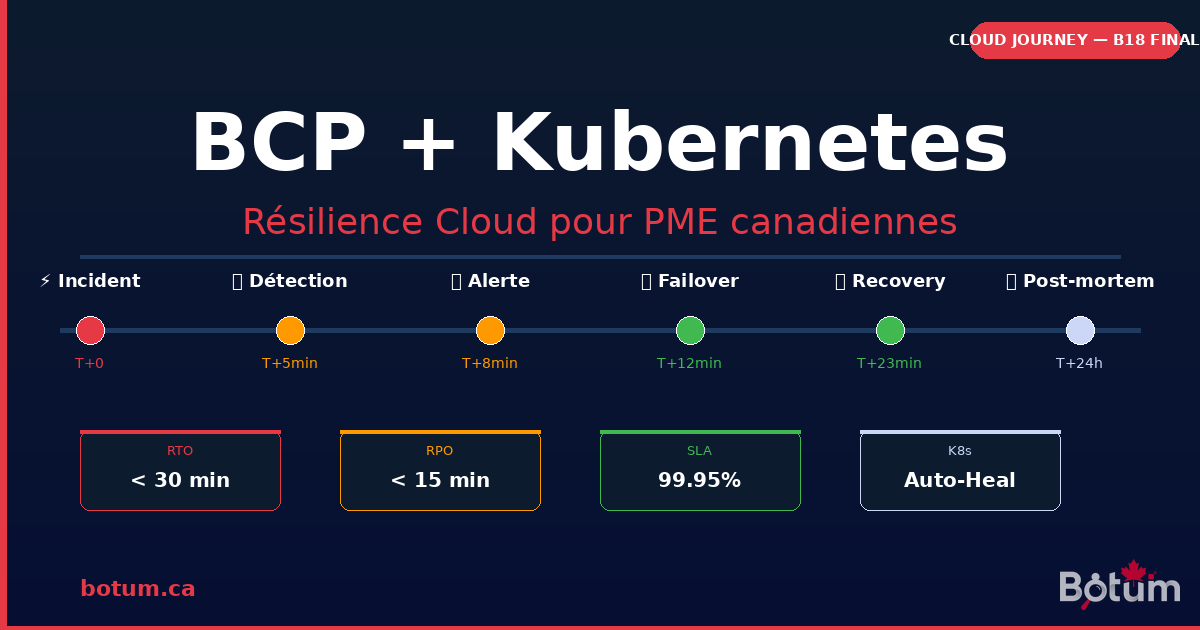

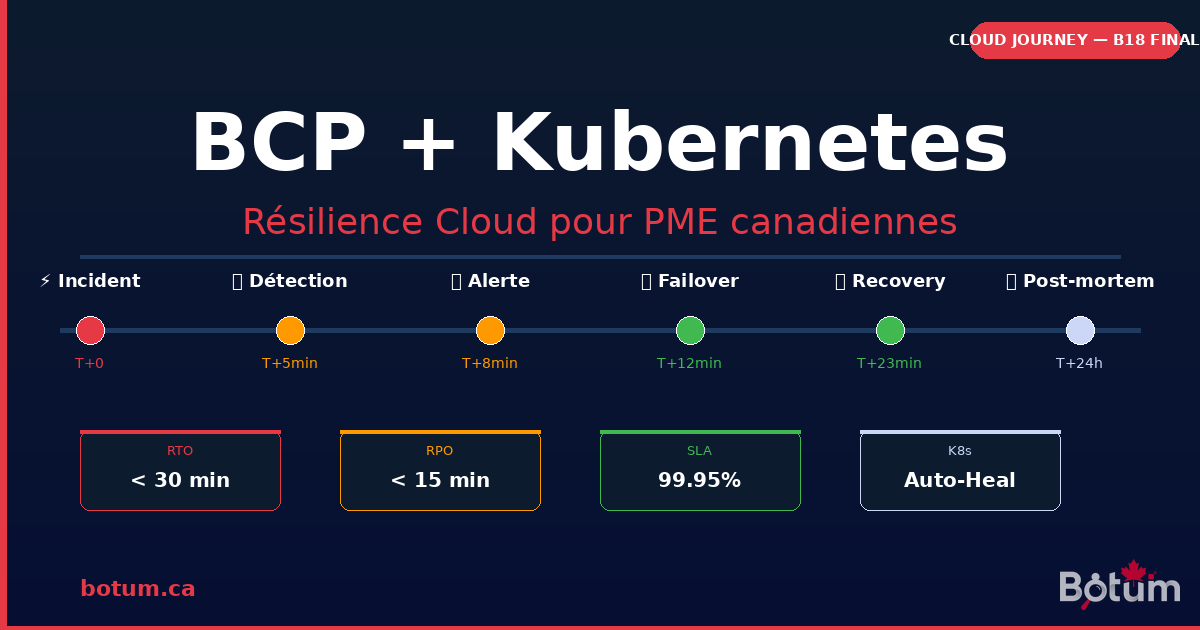

Real BOTUM case: prod incident, RTO achieved in 23 minutes

In November 2024, our production K8s cluster at BOTUM suffered a major outage following a poorly orchestrated node update that corrupted etcd. At 2:37 PM, application services started returning 503 errors. At 2:41 PM, the Alertmanager alert fired. The team was notified via PagerDuty.

What happened:

- T+0: Automatic detection via Alertmanager (etcd cluster unhealthy)

- T+4min: On-call engineer confirms etcd corruption via kubectl

- T+8min: Decision to failover to DR cluster (Warm Standby)

- T+10min: Cloudflare DNS failover triggered (TTL 30s)

- T+12min: ArgoCD on DR cluster confirms all apps deployed and healthy

- T+18min: Velero restore of latest data (RPO: 8 minutes of data lost)

- T+23min: 100% traffic on DR cluster, services operational

What saved us: The DR cluster was already synchronized via ArgoCD from the same git repo as the primary cluster. We had nothing to reconfigure. Velero had taken a snapshot 8 minutes before the incident. The failover runbook had been tested during September's fire drill — the engineer knew the commands by heart.

What we could have done better: Our pre-failure alerts on etcd state should have triggered intervention before complete corruption. Since then, we've added alerts on etcd_server_has_leader and etcd_disk_wal_fsync_duration metrics. The next similar outage will be detected 20 minutes earlier.

Incident cost: 23 minutes of partial downtime. No customer data loss (RPO achieved). Business impact: negligible. Without the BCP: estimated 4-6 hours of complete downtime, potential data loss, and an all-nighter for the entire team.

Conclusion: Cloud Journey — series finale, beginning of resilience

We've reached the end of 18 posts covering the complete cloud journey of a Canadian SME: from the first migration (B01) to total operational resilience (B18). We've covered infrastructure as code, FinOps, Zero Trust security, multi-cloud, and now business continuity.

If you take away one thing from this final post: resilience isn't a state you achieve, it's a practice you maintain. An untested BCP is not a BCP. A Kubernetes cluster without health checks is not high availability. A DR plan without regular fire drills is an illusion of security.

Start where you are. If you don't have Velero, install it this week. If you don't have runbooks, write one for your most likely scenario. If you've never done a fire drill, schedule one for next month. Resilience is built step by step, not in a single night.

Thank you for following the Cloud Journey series. The BOTUM team is here to support you through each of these steps — from architecture to deployment, from compliance to resilience testing.

🚀 Build your cloud BCP with BOTUM

Business continuity planning, resilient architecture, managed Kubernetes — BOTUM teams support you from design to implementation.

Discuss your project →Download this BCP + Kubernetes guide as a PDF.

⬇ Download the guide (PDF)